recent/representative papers

Random matrix theory

Kronecker-product random matrices and a matrix least squares problem (w/ Renyuan Ma)

Spectra of the Conjugate Kernel and Neural Tangent Kernel for linear-width neural networks (w/ Zhichao Wang)

AMP algorithms and mean-field models

Dynamical mean-field analysis of adaptive Langevin diffusions: Replica-symmetric fixed point and empirical Bayes (w/ Justin Ko, Bruno Loureiro, Yue M. Lu, Yandi Shen)

Universality of Approximate Message Passing algorithms and tensor networks (w/ Tianhao Wang, Xinyi Zhong)

The replica-symmetric free energy for Ising spin glasses with orthogonally invariant couplings (w/ Yihong Wu)

Approximate Message Passing algorithms for rotationally invariant matrices

Empirical Bayes methods

Gradient flows for empirical Bayes in high-dimensional linear models (w/ Leying Guan, Yandi Shen, Yihong Wu)

Empirical Bayes PCA in high dimensions (w/ Xinyi Zhong, Chang Su)

Group orbit estimation

Maximum likelihood for high-noise group orbit estimation and single-particle cryo-EM (w/ Roy Lederman, Yi Sun, Tianhao Wang, Sheng Xu)

Likelihood landscape and maximum likelihood estimation for the discrete orbit recovery model (w/ Yi Sun, Tianhao Wang, Yihong Wu)

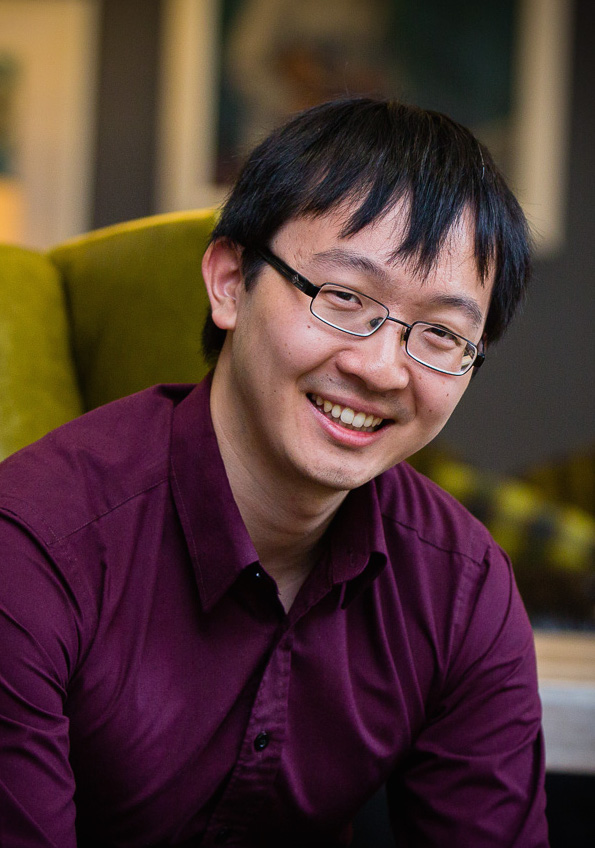

contact

Department of Statistics and Data Science

24 Hillhouse Avenue

New Haven, CT 06511

zhou.fan@yale.edu